1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

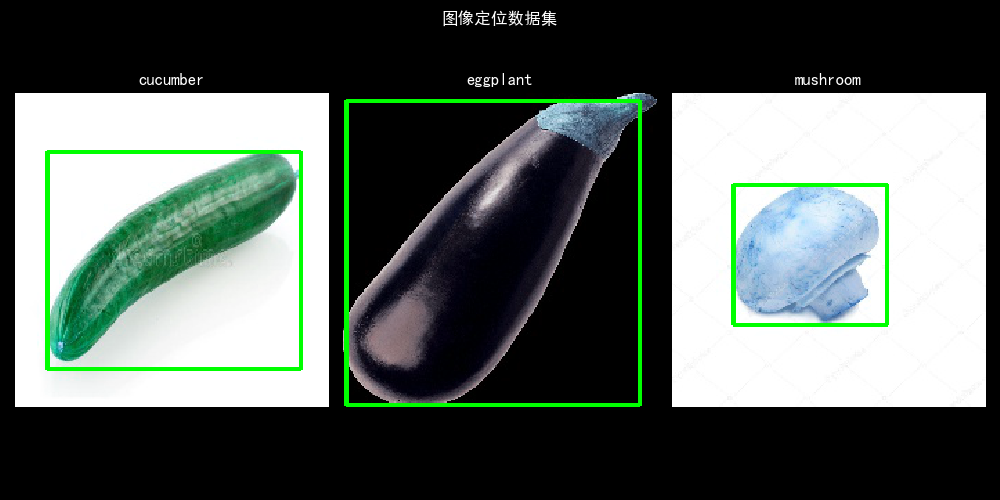

| class LocationDataSet(Dataset):

def __init__(self, root_dir, train=True, transform=None, input_dim=1):

"""

自定义数据集类,加载定位数据集

1. 训练部分,加载编码前50图像和标记数据

2. 测试部分,加载编码50之后图像和标记数据

:param root_dir:

:param train:

:param transform:

"""

cates = ['cucumber', 'eggplant', 'mushroom']

class_binary_label = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

self.train = train

self.transform = transform

self.imgs = []

self.bboxes = []

self.classes = []

for cate_idx in range(3):

if self.train:

for i in range(1, 51):

img, bndbox, class_name = self._get_item(root_dir, cates[cate_idx], i)

bndbox = bndbox / input_dim

self.imgs.append(img)

self.bboxes.append(np.hstack((bndbox, class_binary_label[cate_idx])))

self.classes.append(class_name)

else:

for i in range(51, 61):

img, bndbox, class_name = self._get_item(root_dir, cates[cate_idx], i)

bndbox = bndbox / input_dim

self.imgs.append(img)

self.bboxes.append(np.hstack((bndbox, class_binary_label[cate_idx])))

self.classes.append(class_name)

def __getitem__(self, idx):

img = self.imgs[idx]

if self.transform:

sample = self.transform(img)

else:

sample = img

return sample, torch.Tensor(self.bboxes[idx]).float()

def __len__(self):

return len(self.imgs)

def _get_item(self, root_dir, cate, i):

img_path = os.path.join(root_dir, '%s_%d.jpg' % (cate, i))

img = cv2.imread(img_path)

xml_path = os.path.join(root_dir, '%s_%d.xml' % (cate, i))

x = xmltodict.parse(open(xml_path, 'rb'))

bndbox = x['annotation']['object']['bndbox']

bndbox = np.array(

[float(bndbox['xmin']), float(bndbox['ymin']), float(bndbox['xmax']), float(bndbox['ymax'])])

return img, bndbox, x['annotation']['object']['name']

|

未找到相关的 Issues 进行评论

请联系 @zjykzj 初始化创建