EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks

Original paper: EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks

PyTorch implementation: lukemelas/EfficientNet-PyTorch

Integrated in: ZJCV/ZCls

Abstract

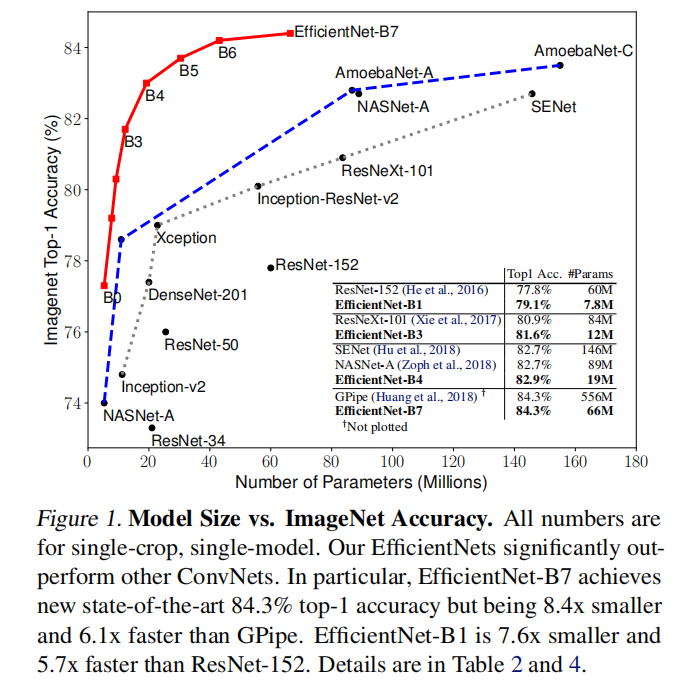

Convolutional Neural Networks (ConvNets) are commonly developed at a fixed resource budget, and then scaled up for better accuracy if more resources are available. In this paper, we systematically study model scaling and identify that carefully balancing network depth, width, and resolution can lead to better performance. Based on this observation, we propose a new scaling method that uniformly scales all dimensions of depth/width/resolution using a simple yet highly effective compound coefficient. We demonstrate the effectiveness of this method on scaling up MobileNets and ResNet.

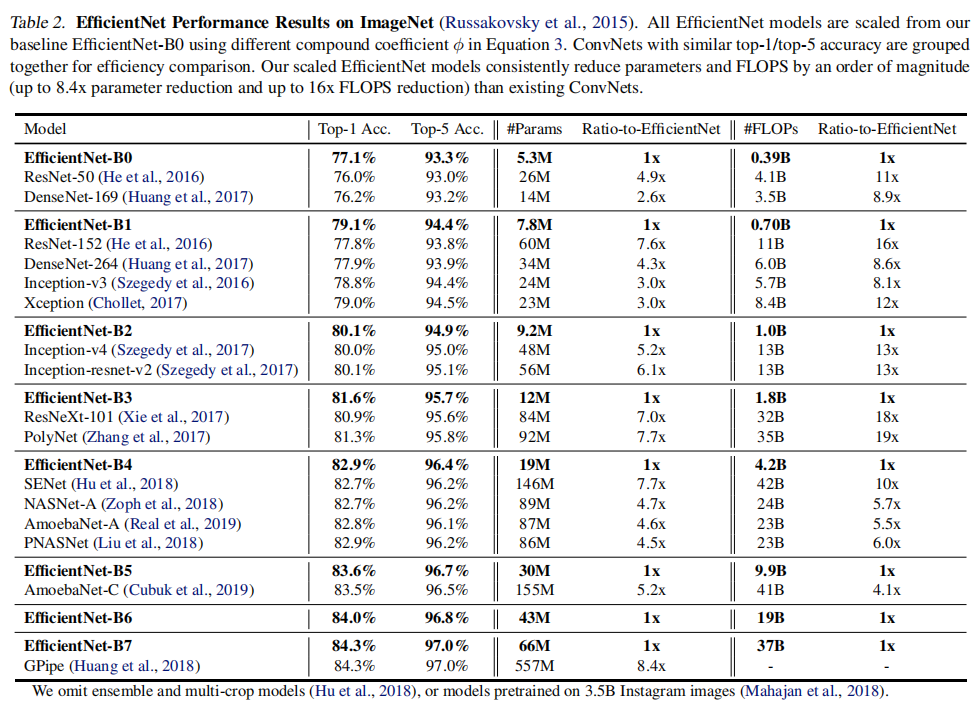

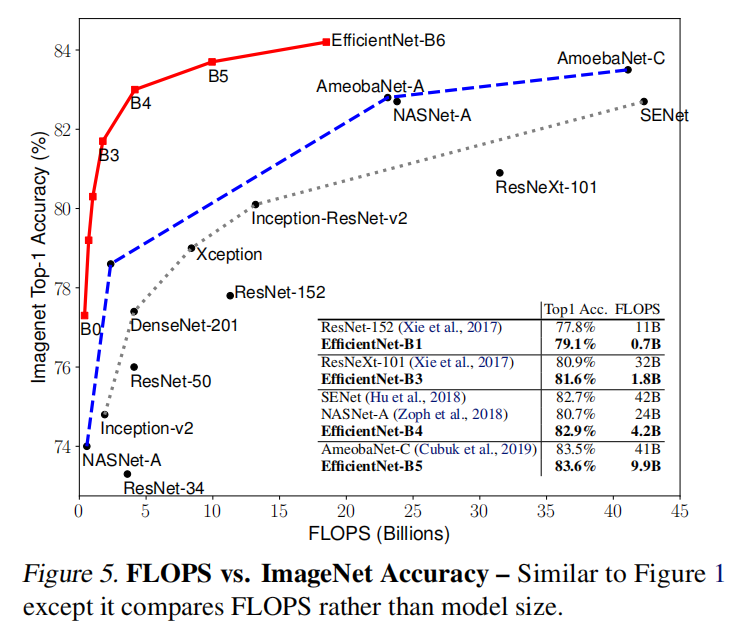

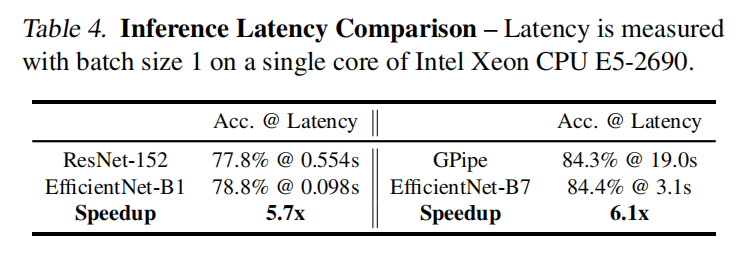

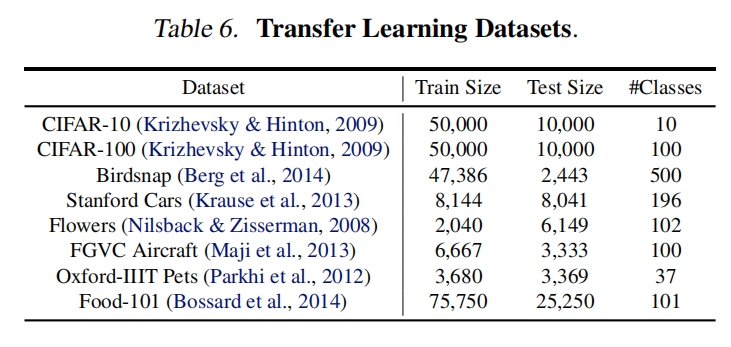

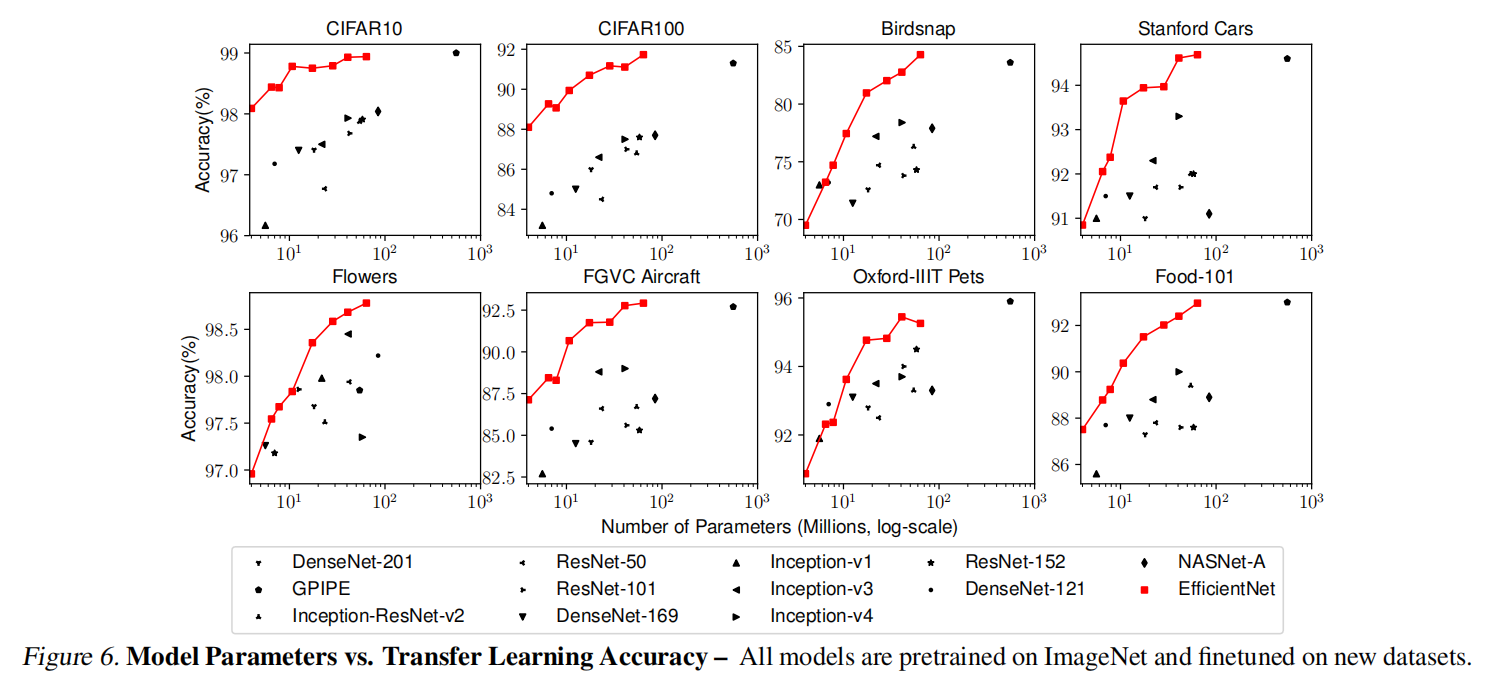

To go even further, we use neural architecture search to design a new baseline network and scale it up to obtain a family of models, called EfficientNets, which achieve much better accuracy and efficiency than previous ConvNets. In particular, our EfficientNet-B7 achieves state-of-the-art 84.3% top-1 accuracy on ImageNet, while being 8.4x smaller and 6.1x faster on inference than the best existing ConvNet. Our EfficientNets also transfer well and achieve state-of-the-art accuracy on CIFAR-100 (91.7%), Flowers (98.8%), and 3 other transfer learning datasets, with an order of magnitude fewer parameters. Source code is at https://github.com/tensorflow/tpu/tree/master/models/official/efficientnet.


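Introduction

The paper observes that previous ConvNets obtain larger and more accurate models by scaling up one or a few dimensions, for example:

- ResNet scales from ResNet-18 up to ResNet-200 by adding more layers (depth);

- GPipe reaches 84.3% ImageNet top-1 accuracy by scaling a baseline model up to 4 times larger;

- ...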

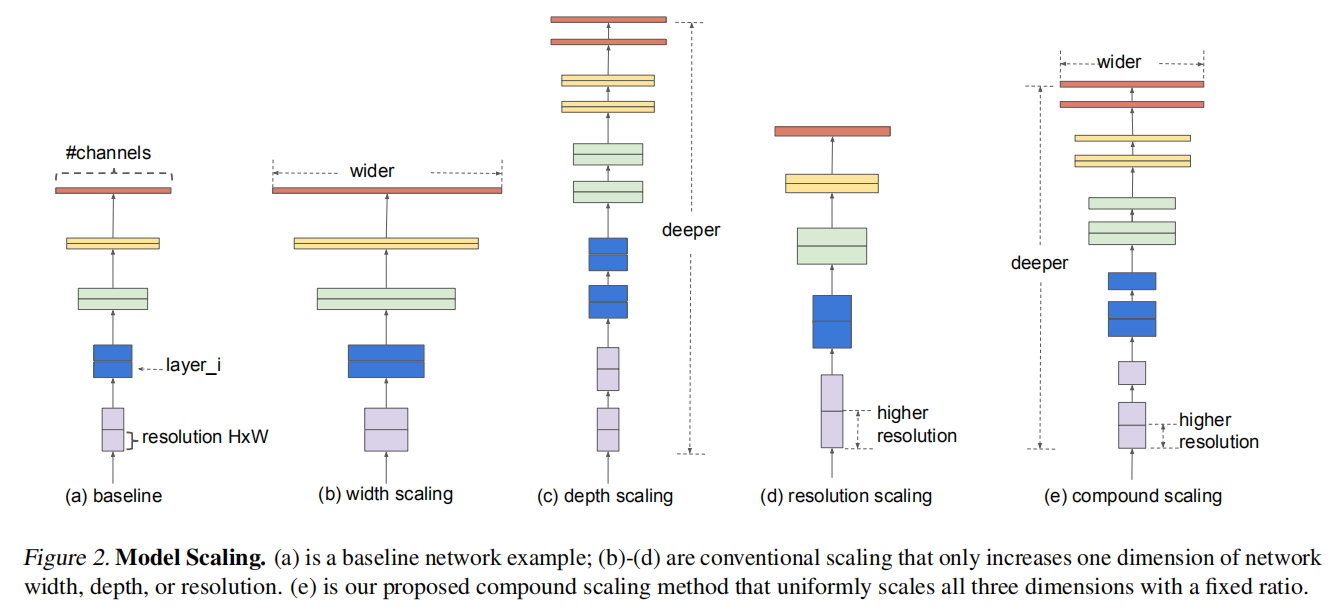

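The paper identifies three scaling dimensions (image resolution, depth, and width) and hand-designs a compound scaling method that balances all three.

Intuitively, combining these dimensions makes sense: a higher input resolution needs a deeper network to enlarge the receptive field, and more channels to capture the finer-grained patterns in the larger image. Suppose we want to use $2^N$ times more computational resources; then we can simply

- scale network depth by $\alpha^N$;

- scale network width by $\beta^N$;

- scale image size by $\gamma^N$;

where $\alpha$, $\beta$, $\gamma$ are constant coefficients determined by a small grid search on the original small model. Applying this compound scaling to a baseline network found by neural architecture search yields a family of models called EfficientNets.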

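Related Work

Prior studies have shown that network depth and width are both important for the expressive power of neural networks: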

- On the expressive power of deep neural networks. ICML, 2017.

- ResNet with one-neuron hidden layers is a universal approximator. NeurIPS, pp. 6172-6181, 2018.

- On the expressive power of overlapping architectures of deep learning. ICLR, 2018.

- The expressive power of neural networks: A view from the width. NeurIPS, 2018.

Compound Model Scaling

Problem Formulation

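The $i$-th convolutional layer can be defined as a function $Y_i = \mathcal{F}_i(X_i)$, where $\mathcal{F}_i$ is the operator, $Y_i$ is the output tensor, and $X_i$ is the input tensor with shape $\langle H_i, W_i, C_i \rangle$ (spatial height, spatial width, and channels; the batch dimension is omitted).

A ConvNet $\mathcal{N}$ can then be represented as a composition of layers: $\mathcal{N} = \mathcal{F}_k \odot \cdots \odot \mathcal{F}_2 \odot \mathcal{F}_1(X_1) = \bigodot_{j=1 \ldots k} \mathcal{F}_j(X_1)$.

In practice, convolutional layers are usually partitioned into several stages, and all layers within a stage share the same architecture, so the composition above can be rewritten as:

$$
\mathcal{N} = \bigodot_{i=1 \ldots s} \mathcal{F}_i^{L_i}\left(X_{\langle H_i, W_i, C_i \rangle}\right)
$$

where $\mathcal{F}_i^{L_i}$ denotes layer $\mathcal{F}_i$ repeated $L_i$ times in stage $i$, and $\langle H_i, W_i, C_i \rangle$ is the shape of the input tensor of stage $i$.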

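Regular ConvNet design mostly focuses on finding the best layer architecture $\mathcal{F}_i$. Model scaling instead tries to expand the network length ($L_i$), width ($C_i$), and/or resolution ($H_i, W_i$) without changing the $\mathcal{F}_i$ predefined in the baseline network.

Even with $\mathcal{F}_i$ fixed, exploring different $L_i, C_i, H_i, W_i$ for each layer is still a large design space. To reduce it further, the paper requires all layers to be scaled uniformly by constant ratios $d$ (depth), $w$ (width), and $r$ (resolution), and formulates scaling as maximizing accuracy under given resource constraints:

$$
\begin{aligned}
\max_{d, w, r} \quad & \mathrm{Accuracy}\big(\mathcal{N}(d, w, r)\big) \\
\text{s.t.} \quad & \mathcal{N}(d, w, r) = \bigodot_{i=1 \ldots s} \hat{\mathcal{F}}_i^{\,d \cdot \hat{L}_i}\left(X_{\langle r \cdot \hat{H}_i,\, r \cdot \hat{W}_i,\, w \cdot \hat{C}_i \rangle}\right) \\
& \mathrm{Memory}(\mathcal{N}) \le \text{target\_memory} \\
& \mathrm{FLOPS}(\mathcal{N}) \le \text{target\_flops}
\end{aligned}
$$

where $\hat{\mathcal{F}}_i, \hat{L}_i, \hat{H}_i, \hat{W}_i, \hat{C}_i$ are the predefined parameters of the baseline network.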

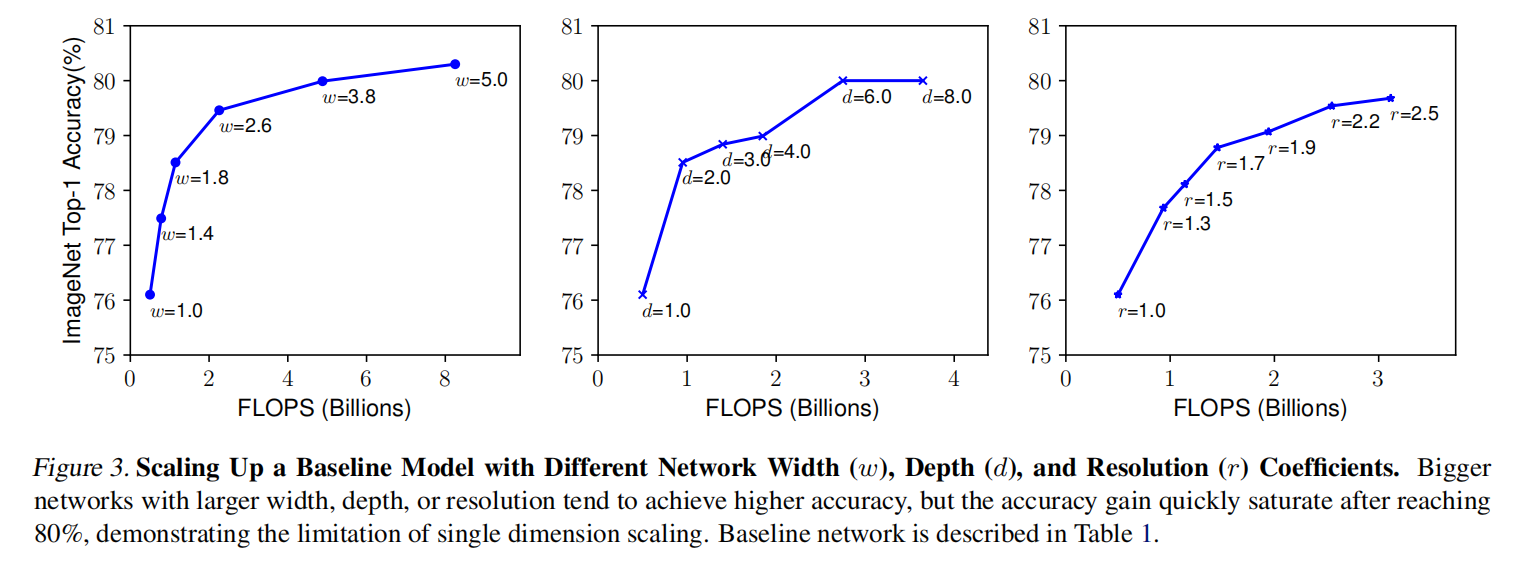
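To make uniform stage scaling concrete, here is a minimal Python sketch: each stage's layer count is multiplied by a depth ratio `d`, its spatial size by a resolution ratio `r`, and its channel count by a width ratio `w`. The `Stage` class, the two-stage baseline, and the cost model (each layer costs about $H \cdot W \cdot C^2$, treating input and output channels as equal and dropping kernel size) are illustrative assumptions, not code from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    layers: int    # L_i: how many times the stage's layer is repeated
    height: int    # H_i: input height of the stage
    width: int     # W_i: input width of the stage
    channels: int  # C_i: channels of the stage (input == output assumed)


def scale_stages(stages, d=1.0, w=1.0, r=1.0):
    """Uniformly scale every stage: layers by d, channels by w, spatial size by r."""
    return [
        Stage(
            layers=round(s.layers * d),
            height=round(s.height * r),
            width=round(s.width * r),
            channels=round(s.channels * w),
        )
        for s in stages
    ]


def relative_flops(stages):
    """Toy cost model: each layer costs about H * W * C^2 (kernel size and constants dropped)."""
    return sum(s.layers * s.height * s.width * s.channels ** 2 for s in stages)


# Hypothetical two-stage baseline, for illustration only.
baseline = [Stage(2, 56, 56, 64), Stage(3, 28, 28, 128)]
base = relative_flops(baseline)
print(relative_flops(scale_stages(baseline, d=2)) / base)  # depth x2 -> 2.0
print(relative_flops(scale_stages(baseline, w=2)) / base)  # width x2 -> 4.0
print(relative_flops(scale_stages(baseline, r=2)) / base)  # resolution x2 -> 4.0
```

Under this cost model, FLOPS grow linearly with the depth ratio but quadratically with the width and resolution ratios.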

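Single-Dimension Scaling

Choosing the scaling dimensions involves two difficulties:

- the coefficients $d$, $w$, $r$ depend on each other;

- their values need to change under different resource constraints.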

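The paper scales up each dimension separately and finds that scaling up any single dimension (width, depth, or resolution) improves accuracy, but the accuracy gain diminishes as the model gets bigger.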

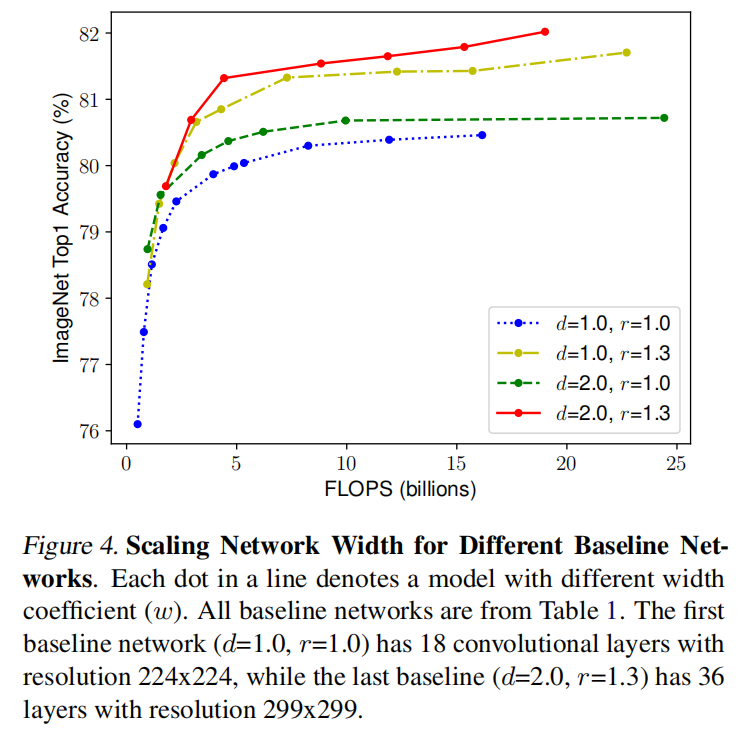

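Compound Scaling

The paper also experiments with scaling multiple dimensions together and finds that balancing the scaling of all dimensions during model scaling gives better results.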

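The paper proposes a hand-designed compound scaling method, which uses a compound coefficient $\phi$ to uniformly scale network depth, width, and resolution through the multipliers $d$, $w$, and $r$:

$$
d = \alpha^{\phi}, \quad w = \beta^{\phi}, \quad r = \gamma^{\phi}, \quad \text{s.t. } \alpha \cdot \beta^2 \cdot \gamma^2 \approx 2,\ \alpha \ge 1,\ \beta \ge 1,\ \gamma \ge 1
$$

where $\alpha$, $\beta$, $\gamma$ are constants determined by a small grid search, and $\phi$ is a user-specified coefficient that controls how many additional resources are available for model scaling.

In general, the FLOPS of a regular convolution op are proportional to $d$, $w^2$, and $r^2$: doubling network depth doubles FLOPS, whereas doubling network width or resolution increases FLOPS by 4 times. Since convolution ops usually dominate the computation cost of a ConvNet, scaling it with the formula above increases total FLOPS by approximately $(\alpha \cdot \beta^2 \cdot \gamma^2)^{\phi}$. The paper constrains $\alpha \cdot \beta^2 \cdot \gamma^2 \approx 2$, so for any new $\phi$ the total FLOPS increase by approximately $2^{\phi}$.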
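A minimal Python sketch of the compound coefficient. It defaults to $\alpha = 1.2$, $\beta = 1.1$, $\gamma = 1.15$, the values the paper reports for EfficientNet-B0; the function names are hypothetical, and the FLOPS multiplier is the idealized $d \cdot w^2 \cdot r^2$ estimate that ignores rounding and non-convolution ops.

```python
# Constants reported in the paper for EfficientNet-B0 (found by grid search at phi = 1).
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15


def compound_coefficients(phi, alpha=ALPHA, beta=BETA, gamma=GAMMA):
    """Return the (depth, width, resolution) multipliers d, w, r for a given phi."""
    return alpha ** phi, beta ** phi, gamma ** phi


def flops_multiplier(phi, alpha=ALPHA, beta=BETA, gamma=GAMMA):
    """Idealized FLOPS growth: convolution FLOPS scale with d * w^2 * r^2."""
    d, w, r = compound_coefficients(phi, alpha, beta, gamma)
    return d * w ** 2 * r ** 2


if __name__ == "__main__":
    for phi in range(4):
        d, w, r = compound_coefficients(phi)
        # 224 is the B0 input resolution; the scaled value here is only approximate.
        print(f"phi={phi}: d={d:.3f}, w={w:.3f}, r={r:.3f}, "
              f"resolution~{round(224 * r)}, FLOPS x{flops_multiplier(phi):.2f}")
```

With these constants $\alpha \cdot \beta^2 \cdot \gamma^2 \approx 1.92$, so each unit increase of $\phi$ roughly doubles FLOPS, which is what the constraint is meant to guarantee.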

EfficientNet Architecture

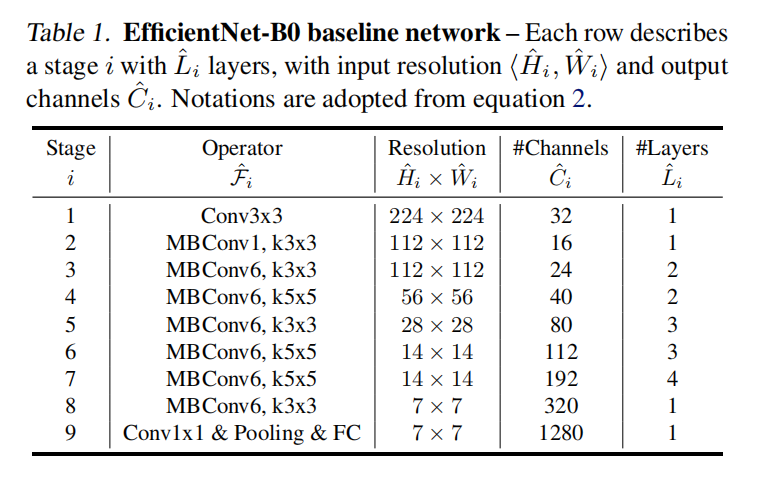

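Using neural architecture search, the paper creates a new baseline model, EfficientNet-B0. Its main building block is the mobile inverted bottleneck block MBConv, with squeeze-and-excitation (SE) attention added. The overall architecture of EfficientNet-B0 is listed in Table 1 of the paper.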
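The B0 stage layout from Table 1 of the paper can be written down as data, together with helpers that apply width and depth multipliers. The rounding rules below (round channels to a multiple of 8 without dropping more than 10%, round layer counts up, and scale the layer count of MBConv stages only) are modeled on what common implementations such as lukemelas/EfficientNet-PyTorch do, but this is a simplified sketch: strides, resolutions, SE ratios, and other details are omitted and may differ from any real implementation.

```python
import math

# EfficientNet-B0 stages following Table 1 of the paper:
# (operator, kernel size, output channels, number of layers)
B0_STAGES = [
    ("Conv", 3, 32, 1),
    ("MBConv1", 3, 16, 1),
    ("MBConv6", 3, 24, 2),
    ("MBConv6", 5, 40, 2),
    ("MBConv6", 3, 80, 3),
    ("MBConv6", 5, 112, 3),
    ("MBConv6", 5, 192, 4),
    ("MBConv6", 3, 320, 1),
    ("Conv1x1 & Pooling & FC", 1, 1280, 1),
]


def round_filters(channels, width_mult, divisor=8):
    """Scale channels by width_mult and round to the nearest multiple of divisor."""
    scaled = channels * width_mult
    new_channels = max(divisor, int(scaled + divisor / 2) // divisor * divisor)
    # Do not round down by more than 10%.
    if new_channels < 0.9 * scaled:
        new_channels += divisor
    return int(new_channels)


def round_repeats(layers, depth_mult):
    """Scale the number of layers by depth_mult, rounding up."""
    return int(math.ceil(layers * depth_mult))


def scale_b0(width_mult, depth_mult):
    """Return the B0 stage list with scaled channels and (MBConv-only) scaled depth."""
    scaled = []
    for op, kernel, channels, layers in B0_STAGES:
        if op.startswith("MBConv"):
            layers = round_repeats(layers, depth_mult)
        scaled.append((op, kernel, round_filters(channels, width_mult), layers))
    return scaled
```

For example, `scale_b0(1.1, 1.2)` widens and deepens every MBConv stage while keeping the stem and head as single layers.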

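Starting from EfficientNet-B0, the compound scaling method is applied in two steps:

- Step 1: fix $\phi = 1$, assuming twice as many resources are available, and do a small grid search for $\alpha$, $\beta$, $\gamma$. The best values found for EfficientNet-B0 are $\alpha = 1.2$, $\beta = 1.1$, $\gamma = 1.15$, under the constraint $\alpha \cdot \beta^2 \cdot \gamma^2 \approx 2$;

- Step 2: fix $\alpha$, $\beta$, $\gamma$ as constants and scale up the baseline with different $\phi$ to obtain EfficientNet-B1 to B7.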
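Step 1 can be sketched as a brute-force grid search in Python. Here `evaluate` is a hypothetical stand-in for training and validating the scaled baseline at each candidate (the expensive part); the grid range, step size, and tolerance are illustrative assumptions, not values from the paper.

```python
from itertools import product


def grid_candidates(start=1.0, stop=1.4, step=0.05, target=2.0, tol=0.1):
    """Enumerate (alpha, beta, gamma) >= start whose alpha * beta^2 * gamma^2 is near target."""
    n = int(round((stop - start) / step)) + 1
    values = [round(start + i * step, 2) for i in range(n)]
    return [
        (a, b, g)
        for a, b, g in product(values, repeat=3)
        if abs(a * b ** 2 * g ** 2 - target) <= tol
    ]


def grid_search(evaluate, **kwargs):
    """Return the candidate with the highest score from evaluate(alpha, beta, gamma)."""
    return max(grid_candidates(**kwargs), key=lambda abg: evaluate(*abg))
```

Because every candidate already satisfies the FLOPS constraint, only accuracy needs to be compared; the search stays cheap because it runs once, on the small baseline, at $\phi = 1$.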

Experiments

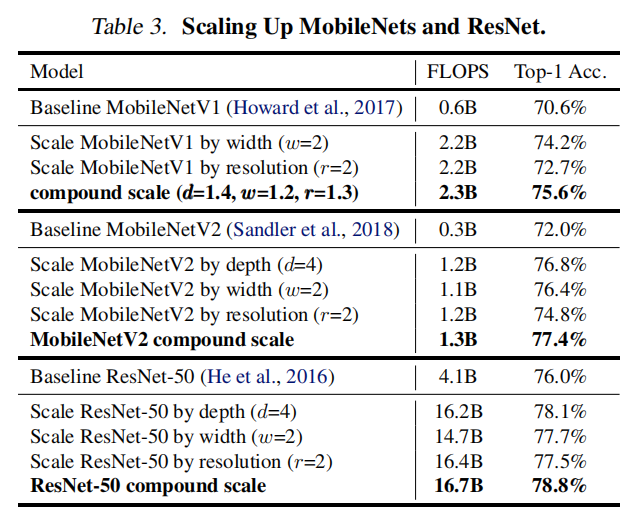

ResNet/MobileNet

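The paper first applies compound scaling to existing ConvNets (ResNet and MobileNets), and the experiments confirm that scaling up multiple dimensions together improves accuracy more than scaling a single dimension.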

EfficientNet

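Training EfficientNet on ImageNet:

- Optimizer: RMSProp with decay 0.9 and momentum 0.9;

- Batch normalization: momentum 0.99;

- Weight decay: 1e-5;

- Learning rate: initial value 0.256, decayed by 0.97 every 2.4 epochs;

- Activation: SiLU (Swish-1);

- Augmentation: AutoAugment;

- Stochastic depth: survival probability 0.8;

- Dropout: increased linearly from 0.2 for B0 to 0.5 for B7.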
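These settings can be collected into a small Python config with the two schedules made explicit. The dictionary keys and function names are hypothetical, and the learning-rate decay is assumed to be a staircase schedule (the rate drops once every full 2.4 epochs). The batch-norm helper reflects that PyTorch's `BatchNorm` momentum weights the new batch statistics, so a TF-style decay of 0.99 corresponds to a PyTorch momentum of about 0.01.

```python
import math

# Hyperparameters from the list above; key names are illustrative.
TRAIN_CONFIG = {
    "optimizer": "RMSProp",
    "rmsprop_decay": 0.9,
    "momentum": 0.9,
    "bn_momentum": 0.99,  # TF-style moving-average decay
    "weight_decay": 1e-5,
    "initial_lr": 0.256,
    "lr_decay_rate": 0.97,
    "lr_decay_epochs": 2.4,
    "activation": "SiLU",
    "augmentation": "AutoAugment",
    "survival_prob": 0.8,  # stochastic depth
}


def learning_rate(epoch, cfg=TRAIN_CONFIG):
    """Decay by lr_decay_rate once every lr_decay_epochs (staircase form assumed)."""
    num_decays = math.floor(epoch / cfg["lr_decay_epochs"])
    return cfg["initial_lr"] * cfg["lr_decay_rate"] ** num_decays


def dropout_rate(model_index, low=0.2, high=0.5, num_models=8):
    """Dropout for EfficientNet-B{model_index}, linear from B0 (low) to B7 (high)."""
    if not 0 <= model_index < num_models:
        raise ValueError(f"model_index must be in [0, {num_models - 1}]")
    return low + (high - low) * model_index / (num_models - 1)


def pytorch_bn_momentum(tf_decay=TRAIN_CONFIG["bn_momentum"]):
    """Convert a TF-style BN decay into PyTorch's momentum convention."""
    return 1.0 - tf_decay
```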

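The paper also randomly holds out 25k images from the training set as a minival set and performs early stopping on it; the early-stopped model is then evaluated on the original validation set to report the final accuracy.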
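A minimal Python sketch of this protocol, assuming samples are addressed by integer indices and that one minival accuracy is recorded per checkpoint. The function names and seed are hypothetical, and picking the single best checkpoint is a simplification; the exact early-stopping rule is not specified here.

```python
import random


def split_minival(num_train, minival_size=25_000, seed=0):
    """Randomly hold out minival_size training indices as a minival set."""
    if minival_size > num_train:
        raise ValueError("minival_size cannot exceed num_train")
    rng = random.Random(seed)
    minival = set(rng.sample(range(num_train), minival_size))
    train = [i for i in range(num_train) if i not in minival]
    return train, sorted(minival)


def best_checkpoint(minival_accuracies):
    """Return the index of the checkpoint with the highest minival accuracy."""
    return max(range(len(minival_accuracies)), key=minival_accuracies.__getitem__)
```

For ImageNet-1k, `split_minival(1_281_167)` leaves the remaining training images for optimization; only the checkpoint chosen on minival is then evaluated once on the validation set.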

The paper also measures the models' inference latency to verify that they are efficient in practice.

Transfer Learning

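Summary

Key points of the paper:

- Proposes the compound scaling method;

- Finds the EfficientNet-B0 architecture through neural architecture search;

- Shows that the lightweight MBConv operator can also be scaled up into large models.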
