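The main contents are as follows:

- The difference between regression and classification

- Linear regression

- The least squares method

- The gradient descent method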

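Regression and classification

Regression, like classification, predicts the value of a variable.

Regression predicts a continuous variable: regression predictive modeling means building a mapping function f from the input variables X to a continuous output variable Y.

Classification predicts a discrete variable (the inputs may be discrete or continuous): classification predictive modeling means building a mapping function f from the input variables X to a discrete output variable Y.

For example, predicting the temperature is a regression problem, while predicting whether it will rain or be sunny is a classification problem.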

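Linear regression

Linear regression models the relationship between the independent variables and the dependent variable with a linear model:

$$y = w_0 + w_1 x_1 + w_2 x_2 + \cdots + w_n x_n$$

Here $x_1, \dots, x_n$ are the independent variables, $y$ is the dependent variable, and $w_0, \dots, w_n$ are the model parameters.

If there is only one independent variable, the problem is called single-variable linear regression (or simple linear regression). If there are several independent variables, it is called multi-variable linear regression (or multiple linear regression).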

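Single-variable linear regression

A single-variable linear regression problem reduces to finding a straight line in the two-dimensional plane.

The model is:

$$\hat{y} = w_0 + w_1 x$$

with parameter set $w = \{w_0, w_1\}$.

During learning, we need to judge whether the parameters $w_0$ and $w_1$ are good enough, that is, whether the line lies close to all the data points. The mean squared error (MSE) measures how close the predictions are to the actual data points. With $m$ samples, the loss is:

$$J(w_0, w_1) = \frac{1}{m}\sum_{i=1}^{m}\left(y_i - \hat{y}_i\right)^2 = \frac{1}{m}\sum_{i=1}^{m}\left(y_i - w_0 - w_1 x_i\right)^2$$

Here $y_i$ is the true value, $\hat{y}_i$ is the estimated value, and $J$ is the loss value.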
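As a quick illustration of the model and the loss, the NumPy sketch below evaluates $\hat{y} = w_0 + w_1 x$ and the MSE on a few made-up points. The function names `predict_single` and `mse` are hypothetical helpers and are not part of the original code.

```python
import numpy as np

def predict_single(x, w0, w1):
    """Single-variable linear model: y_hat = w0 + w1 * x."""
    return w0 + w1 * np.asarray(x, dtype=float)

def mse(y_true, y_pred):
    """Mean squared error: the average of the squared residuals."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.mean((y_true - y_pred) ** 2)

# Made-up data points
x = np.array([1.0, 2.0, 3.0])
y = np.array([3.0, 5.0, 8.0])

y_hat = predict_single(x, w0=1.0, w1=2.0)  # [3., 5., 7.]
print(mse(y, y_hat))                       # residuals 0, 0, 1 -> about 0.333
```

Only the last point misses (by 1), so the loss is $1/3$.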

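Multi-variable linear regression

The multi-variable linear regression model is:

$$\hat{y}_i = w_0 + w_1 x_{i1} + w_2 x_{i2} + \cdots + w_n x_{in},\qquad i = 1, \dots, m$$

Here $m$ is the number of equations (one per sample), and $n$ is the number of parameters per group of variables (one per variable). Setting $x_{i0} = 1$, the formula becomes:

$$\hat{y}_i = \sum_{j=0}^{n} w_j x_{ij}$$

Each group now has $n + 1$ parameters, and the vectorized form is:

$$\hat{Y} = XW$$

where

$$X = \begin{bmatrix} 1 & x_{11} & \cdots & x_{1n} \\ \vdots & \vdots & & \vdots \\ 1 & x_{m1} & \cdots & x_{mn} \end{bmatrix} \in \mathbb{R}^{m \times (n+1)},\qquad W = \begin{bmatrix} w_0 \\ w_1 \\ \vdots \\ w_n \end{bmatrix},\qquad \hat{Y} = \begin{bmatrix} \hat{y}_1 \\ \vdots \\ \hat{y}_m \end{bmatrix}$$

The mean squared error is again used as the loss function:

$$J(W) = \frac{1}{m}(Y - XW)^T(Y - XW)$$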
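The role of the constant feature $x_{i0} = 1$ can be checked numerically. This sketch uses made-up numbers ($m = 4$ samples, $n = 2$ variables). It builds $X$ by prepending a column of ones, then confirms that the matrix product $XW$ gives the same predictions as the explicit sum $\sum_{j=0}^{n} w_j x_{ij}$.

```python
import numpy as np

# Made-up data: m = 4 samples, n = 2 variables each
X_raw = np.array([[1.0, 2.0],
                  [2.0, 0.0],
                  [3.0, 1.0],
                  [0.0, 4.0]])
W = np.array([0.5, 2.0, -1.0])  # [w0, w1, w2]

# Prepend x_0 = 1 to every row so w0 becomes part of the dot product
X = np.hstack([np.ones((X_raw.shape[0], 1)), X_raw])  # shape (m, n + 1)

# Vectorized form: Y_hat = X W
y_vec = X @ W

# Explicit double loop: y_hat_i = sum_j w_j * x_ij
y_loop = np.array([sum(W[j] * X[i, j] for j in range(X.shape[1]))
                   for i in range(X.shape[0])])

print(y_vec)  # [ 0.5  4.5  5.5 -3.5]
```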

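Least squares method

The least squares method computes the parameters of a linear regression problem by minimizing the sum of squared errors. This turns the problem into finding the minimum of the loss function, and at that minimum the partial derivatives of the loss with respect to the parameters must be zero.

The least squares computation can be done in two ways:

- a geometric (scalar) derivation, used for single-variable linear regression;

- a matrix derivation, used for multi-variable linear regression.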
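The claim that the partial derivatives vanish at the minimum can be checked numerically. The sketch below makes a few assumptions:

- the data is synthetic, drawn with `numpy.random.default_rng`, and the coefficients are made up;
- the minimizer is found with `np.linalg.lstsq`;
- the gradient of the MSE loss is written as $-\frac{2}{m}X^T(Y - XW)$, and `mse_grad` is a hypothetical helper name.

```python
import numpy as np

def mse_grad(X, y, w):
    """Gradient of J(w) = (1/m) * ||y - X w||^2 with respect to w."""
    m = X.shape[0]
    return -2.0 / m * X.T @ (y - X @ w)

# Synthetic data (made-up coefficients); the first column is x_0 = 1
rng = np.random.default_rng(0)
X = np.hstack([np.ones((50, 1)), rng.normal(size=(50, 2))])
y = X @ np.array([1.0, -2.0, 0.5]) + 0.1 * rng.normal(size=50)

# Least-squares minimizer of the squared error
w_ls, *_ = np.linalg.lstsq(X, y, rcond=None)

print(np.abs(mse_grad(X, y, w_ls)).max())        # essentially 0 at the minimum
print(np.abs(mse_grad(X, y, w_ls + 0.1)).max())  # clearly nonzero away from it
```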

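Geometric computation

At the minimum, the partial derivatives with respect to $w_0$ and $w_1$ must be zero:

$$\frac{\partial J}{\partial w_0} = -\frac{2}{m}\sum_{i=1}^{m}\left(y_i - w_0 - w_1 x_i\right) = 0$$

$$\frac{\partial J}{\partial w_1} = -\frac{2}{m}\sum_{i=1}^{m} x_i\left(y_i - w_0 - w_1 x_i\right) = 0$$

The first equation gives $\bar{y} - w_0 - w_1\bar{x} = 0$. The second gives $\overline{xy} - w_0\bar{x} - w_1\overline{x^2} = 0$. Solving the two together yields:

$$w_1 = \frac{\overline{xy} - \bar{x}\,\bar{y}}{\overline{x^2} - \bar{x}^2},\qquad w_0 = \bar{y} - w_1\bar{x}$$

- $\bar{y}$ is the mean of the true outputs

- $\bar{x}$ is the mean of the input variable

- $\overline{xy}$ is the mean of the product of the input variable and the true output

- Other barred quantities follow the same pattern; for example, $\overline{x^2}$ is the mean of the squared inputs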
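The closed-form formulas for $w_0$ and $w_1$ can be cross-checked against an independent fit. The sketch below uses a hypothetical helper `fit_single` and made-up data. It computes the parameters from sample means and compares them with `np.polyfit(x, y, deg=1)`, which returns the slope first and the intercept second.

```python
import numpy as np

def fit_single(x, y):
    """Closed-form least squares for y = w0 + w1 * x using sample means."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    mu_x, mu_y = x.mean(), y.mean()
    mu_xy, mu_xx = (x * y).mean(), (x * x).mean()

    # Denominator is the variance of x; it is zero if all x are equal
    w1 = (mu_xy - mu_x * mu_y) / (mu_xx - mu_x ** 2)
    w0 = mu_y - w1 * mu_x
    return w0, w1

# Made-up data
x = [1, 2, 3, 4, 5]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

w0, w1 = fit_single(x, y)
slope, intercept = np.polyfit(x, y, deg=1)  # reference fit, highest power first

print(w0, w1)  # approximately 0.05 and 1.99
```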

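Matrix computation

The basic matrix operations used are:

$$(AB)^T = B^T A^T,\qquad (A + B)^T = A^T + B^T$$

The matrix derivatives used are:

$$\frac{\partial (W^T a)}{\partial W} = \frac{\partial (a^T W)}{\partial W} = a,\qquad \frac{\partial (W^T A W)}{\partial W} = (A + A^T)W$$

When $A$ is symmetric, the second one equals $2AW$.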
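These derivative identities are easy to sanity-check with finite differences. The sketch below uses a hypothetical helper `numerical_grad` (central differences) on random vectors and a random, non-symmetric $A$. It compares the numerical gradients with $a$ and with $(A + A^T)W$.

```python
import numpy as np

def numerical_grad(f, w, eps=1e-6):
    """Central-difference gradient of a scalar function f at vector w."""
    g = np.zeros_like(w)
    for k in range(w.size):
        e = np.zeros_like(w)
        e[k] = eps
        g[k] = (f(w + e) - f(w - e)) / (2 * eps)
    return g

rng = np.random.default_rng(1)
a = rng.normal(size=3)
A = rng.normal(size=(3, 3))  # deliberately not symmetric
w = rng.normal(size=3)

g_linear = numerical_grad(lambda v: v @ a, w)    # d(w^T a)/dw
g_quad = numerical_grad(lambda v: v @ A @ v, w)  # d(w^T A w)/dw

print(np.allclose(g_linear, a))            # True
print(np.allclose(g_quad, (A + A.T) @ w))  # True
```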

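For the multi-variable linear regression problem:

$$J(W) = \frac{1}{m}(Y - XW)^T(Y - XW) = \frac{1}{m}\left(Y^TY - Y^TXW - W^TX^TY + W^TX^TXW\right)$$

Here $Y^TXW$ is the transpose of $W^TX^TY$. Both are $1 \times 1$ scalars, so they are equal, and the expression simplifies to:

$$J(W) = \frac{1}{m}\left(Y^TY - 2W^TX^TY + W^TX^TXW\right)$$

Solve by setting the derivative to zero. Since $X^TX$ is symmetric, this gives:

$$\frac{\partial J}{\partial W} = \frac{1}{m}\left(-2X^TY + 2X^TXW\right) = 0 \;\Rightarrow\; X^TXW = X^TY \;\Rightarrow\; W = (X^TX)^{-1}X^TY$$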
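Below is a minimal sketch of the closed-form solution $W = (X^TX)^{-1}X^TY$ on synthetic data, assuming NumPy. Rather than forming the inverse explicitly, it solves the linear system $X^TXW = X^TY$ with `np.linalg.solve`. This gives the same $W$ and is usually more numerically stable. The result is compared with `np.linalg.lstsq`. The helper name `normal_equation` and the coefficients in `true_w` are made up.

```python
import numpy as np

def normal_equation(X_raw, y):
    """Least squares W for X = [1, X_raw] via the normal equations."""
    X = np.hstack([np.ones((X_raw.shape[0], 1)), X_raw])  # prepend x_0 = 1
    # Solve (X^T X) W = X^T Y instead of multiplying by the explicit inverse
    return np.linalg.solve(X.T @ X, X.T @ y)

# Synthetic data (made-up coefficients)
rng = np.random.default_rng(2)
X_raw = rng.uniform(0, 10, size=(100, 2))
true_w = np.array([4.0, 1.5, -0.7])  # hypothetical [w0, w1, w2]
y = true_w[0] + X_raw @ true_w[1:] + rng.normal(scale=0.5, size=100)

W = normal_equation(X_raw, y)

# Reference solution from NumPy's least-squares solver
X = np.hstack([np.ones((100, 1)), X_raw])
W_ref, *_ = np.linalg.lstsq(X, y, rcond=None)

print(W)  # close to true_w
```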

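$X^TX$ must be nonsingular, i.e. $|X^TX| \neq 0$, to guarantee that it is invertible.

The following theorems hold for matrix rank:

- An $n \times n$ matrix $A$ satisfies $|A| \neq 0$ if and only if $r(A) = n$; such a matrix is called full rank.

- If $A$ is an $m \times n$ matrix, then $r(A^TA) = r(A)$.

$X^TX$ is an $(n+1) \times (n+1)$ matrix, so $X$ must have rank $r(X) = n + 1$. Only then does the matrix form of least squares work for linear regression, and this requires at least $n + 1$ samples (normally the number of samples is larger than the number of variables).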
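The rank condition can be checked directly with `np.linalg.matrix_rank`. This sketch uses made-up matrices, each already including the column of ones:

- in `X_ok`, the columns are linearly independent;
- in `X_bad`, the third column is twice the second, so $r(X) = r(X^TX) = 2 < n + 1 = 3$ and $X^TX$ cannot be inverted.

```python
import numpy as np

# Made-up design matrices; the first column is x_0 = 1
X_ok = np.array([[1.0, 1.0, 2.0],
                 [1.0, 2.0, 1.0],
                 [1.0, 3.0, 5.0],
                 [1.0, 4.0, 3.0]])

# Third column = 2 * second column, so the columns are linearly dependent
X_bad = np.array([[1.0, 1.0, 2.0],
                  [1.0, 2.0, 4.0],
                  [1.0, 3.0, 6.0],
                  [1.0, 4.0, 8.0]])

for name, X in [("X_ok", X_ok), ("X_bad", X_bad)]:
    r_X = np.linalg.matrix_rank(X)
    r_XtX = np.linalg.matrix_rank(X.T @ X)  # equals r(X)
    full = r_X == X.shape[1]                # need r(X) = n + 1
    print(name, r_X, r_XtX, "invertible" if full else "singular")
```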

Example

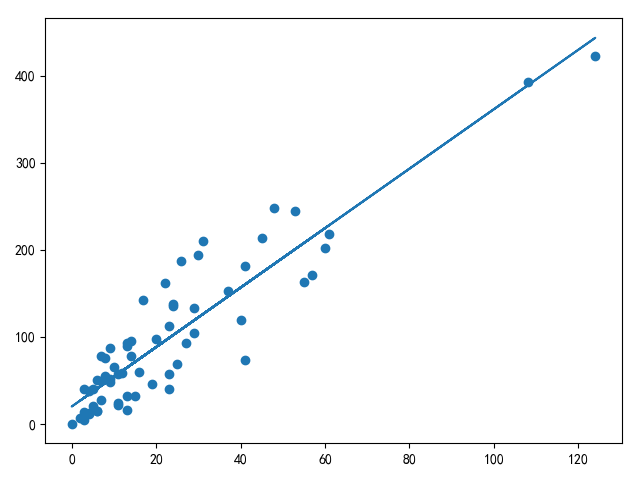

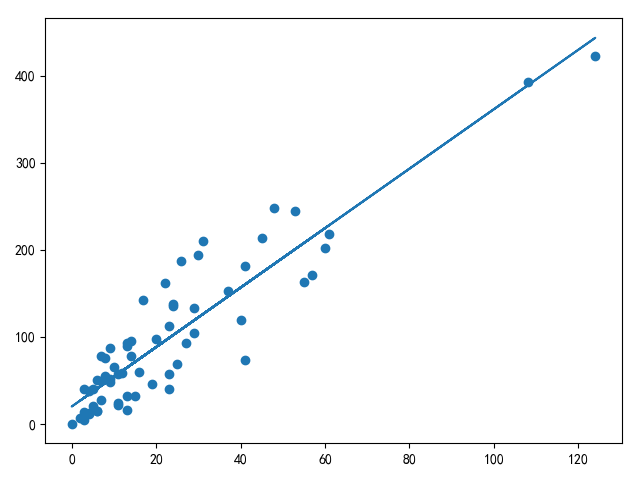

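The single-variable test data is the Swedish auto insurance dataset, as used in [线性回归最小二乘法和梯度下降法] (Linear regression: least squares and gradient descent).

The multi-variable test data is ex1data2.txt from Coursera.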


```python
"""
Solving linear regression problems with the least squares method
"""

import matplotlib.pyplot as plt
import numpy as np


def load_sweden_data():
    """
    Load the single-variable data (Swedish auto insurance dataset)
    """
    path = '../data/sweden.txt'
    with open(path, 'r') as f:
        # The file is a single line of space-separated values, read as (x, y) pairs
        line = f.readline()
    res = np.array(line.strip().split(' ')).reshape((-1, 2))

    x = []
    y = []
    for item in res:
        x.append(int(item[0]))
        # y uses a comma as the decimal separator; convert it before parsing
        y.append(float(str(item[1]).replace(',', '.')))
    return np.array(x), np.array(y)


def load_ex1_multi_data():
    """
    Load the multi-variable data (comma-separated: two features, then the target)
    """
    path = '../data/coursera2.txt'
    datas = []
    with open(path, 'r') as f:
        for line in f.readlines():
            if line.strip():  # skip blank lines such as a trailing newline
                datas.append(line.strip().split(','))

    data_arr = np.array(datas).astype(float)
    X = data_arr[:, :2]
    Y = data_arr[:, 2]
    return X, Y


def least_square_loss_v1(x, y):
    """
    Least squares, geometric computation (single variable)
    """
    X = np.array(x)
    Y = np.array(y)

    muX = np.mean(X)
    muY = np.mean(Y)
    muXY = np.mean(X * Y)
    muXX = np.mean(X * X)

    # w1 = (mean(xy) - mean(x) * mean(y)) / (mean(x^2) - mean(x)^2)
    w1 = (muXY - muX * muY) / (muXX - muX ** 2)
    # w0 = mean(y) - w1 * mean(x)
    w0 = muY - w1 * muX
    return w0, w1


def least_square_loss_v2(x, y):
    """
    Least squares, matrix computation (multiple variables)
    """
    # Prepend a column of ones (x_0 = 1) so that w[0] is the intercept w0
    extend_x = np.insert(x, 0, values=np.ones(x.shape[0]), axis=1)
    # W = (X^T X)^{-1} X^T Y; X^T X must be invertible
    w = np.linalg.inv(extend_x.T.dot(extend_x)).dot(extend_x.T).dot(y)
    return w


def compute_single_variable_linear_regression():
    x, y = load_sweden_data()
    w0, w1 = least_square_loss_v1(x, y)

    y2 = w1 * x + w0
    plt.scatter(x, y)  # raw data points
    plt.plot(x, y2)    # fitted line
    plt.show()


def compute_multi_variable_linear_regression():
    x, y = load_ex1_multi_data()
    w = least_square_loss_v2(x, y)
    print(w)


if __name__ == '__main__':
    # Call compute_single_variable_linear_regression() for the single-variable case
    compute_multi_variable_linear_regression()
```


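Scope of application

The least squares method computes the solution directly and is simple to use, so it is best suited to single-variable linear regression.

For multi-variable linear regression, least squares raises two concerns:

- Efficiency: inverting the $(n+1) \times (n+1)$ matrix $X^TX$ is expensive, costing roughly $O((n+1)^3)$ operations.

- Invertibility: $X^TX$ may not be invertible at all.

Gradient descent is therefore recommended for multi-variable linear regression problems.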
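Since gradient descent is recommended here, below is a minimal batch gradient descent sketch for the same MSE loss, assuming NumPy and synthetic data:

- It standardizes the features. This is an added assumption, so that a single learning rate suits every dimension.
- It repeatedly steps along the negative gradient, where the gradient is $-\frac{2}{m}X^T(Y - XW)$.
- It compares the result with `np.linalg.lstsq` on the same standardized design matrix.

The helper name `gradient_descent` and the `lr` and `epochs` values are illustrative choices, not settings from the original article.

```python
import numpy as np

def gradient_descent(X_raw, y, lr=0.1, epochs=2000):
    """Batch gradient descent on J(W) = (1/m) * ||Y - X W||^2."""
    # Standardize features so one learning rate suits all dimensions
    mu, sigma = X_raw.mean(axis=0), X_raw.std(axis=0)
    X = np.hstack([np.ones((X_raw.shape[0], 1)), (X_raw - mu) / sigma])

    m = X.shape[0]
    W = np.zeros(X.shape[1])
    for _ in range(epochs):
        grad = -2.0 / m * X.T @ (y - X @ W)  # gradient of the MSE loss
        W -= lr * grad                       # step against the gradient
    return W, mu, sigma

# Synthetic data (made-up coefficients)
rng = np.random.default_rng(3)
X_raw = rng.uniform(0, 10, size=(200, 3))
y = 2.0 + X_raw @ np.array([1.0, -3.0, 0.5]) + rng.normal(scale=0.2, size=200)

W_gd, mu, sigma = gradient_descent(X_raw, y)

# Closed-form reference on the same standardized design matrix
X_std = np.hstack([np.ones((200, 1)), (X_raw - mu) / sigma])
W_ls, *_ = np.linalg.lstsq(X_std, y, rcond=None)

print(np.max(np.abs(W_gd - W_ls)))  # small: both minimize the same loss
```

Because the weights are learned on standardized features, predicting on new data requires applying the same `mu` and `sigma` first.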

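Summary

This article covered the linear regression model and used the least squares method (minimizing the sum of squared errors) to train and predict on single-variable and multi-variable linear data.

During training, the linear regression model uses a linear mapping for the forward computation and the mean squared error to compute the loss.

- For single-variable linear regression, use the geometric form of least squares.

- For multi-variable linear regression, use the matrix form of least squares when the number of variables is small and $m \geq n + 1$ and $r(X) = n + 1$ hold; otherwise, update the weights with gradient descent.

The linear regression model is suited to regression problems; for classification, a logistic regression model can be used.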
